Numbers

In theory there is no difference between theory and practice; in practice there is.

There's complexity in everything if you look closely enough. This is a dive into numbers, in the general, computing sense. Day-to-day, you can usually treat computer numbers as mathematical numbers, but in significant cases, you have to understand how they're different and weird and interesting. I'll write about those next - starting with Kahan summation, but for now, let's explore.

There are a few assumptions and simplifications taken in these graphics: please consult the nerd disclaimers at the bottom for a full list.

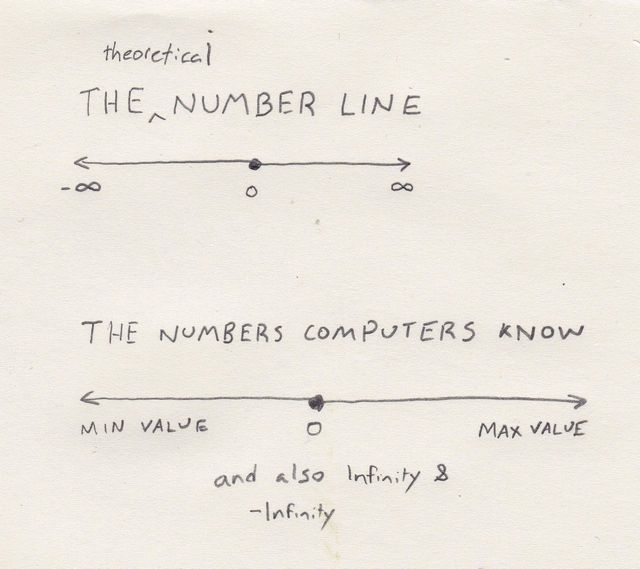

To begin with, think of a number line. In math, it's a continuous, infinite set of numbers stretching from negative to positive infinity. It's infinite, in that between any two numbers lies another number, infinitely deep. It's continuous, in that there are no gaps.

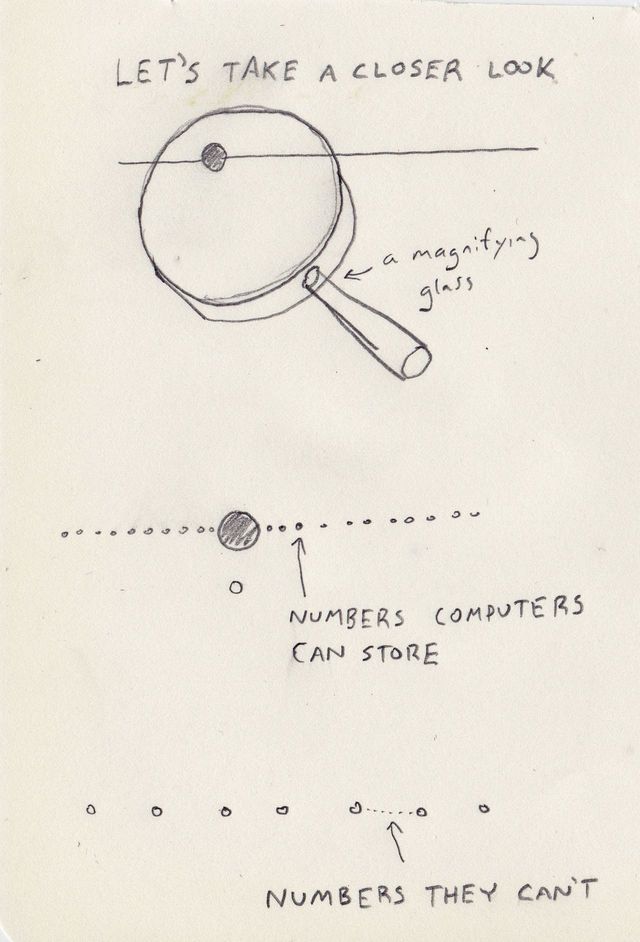

The computer number line looks similar on the surface, but it doesn't go cleanly to infinity. There usually is a representation of infinity that works like infinity does in math, but there's also some 'maximum number' and a huge, some might say infinitely large, gap between that maximum number and infinity. The rest of the number line looks continuous, though, suspiciously. Let's zoom in.
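To poke at that maximum number and the gap beyond it, here's a minimal sketch. It assumes Python and its standard 64-bit floats, neither of which appears in the graphics; the variable names are just for illustration.

```python
import math
import sys

largest = sys.float_info.max   # the biggest finite 64-bit float, roughly 1.8e308
infinity = math.inf            # the special value that stands in for infinity

print(largest < infinity)            # True: the maximum is still finite
print(largest * 2 == infinity)       # True: overshooting the maximum lands on infinity
print(infinity + 1 == infinity)      # True: infinity behaves like it does in math
```

There's nothing between `largest` and `infinity`: multiply past the maximum and you jump the whole gap at once.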

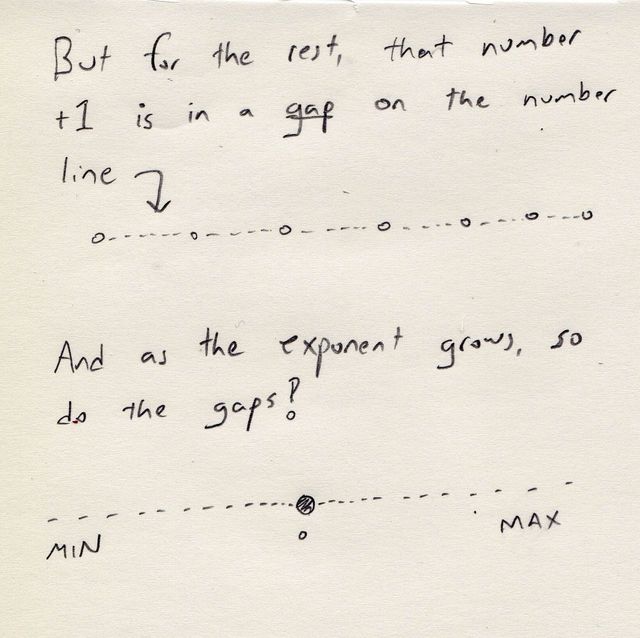

If you look closely, the line is actually a ton of points, just spaced very close together: it isn't continuous or infinite! Why would this be?
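You can see the individual points for yourself. This is a small sketch assuming Python 3.9 or newer (for `math.nextafter`) and 64-bit floats; it asks for the very next point on the line after 1.0.

```python
import math

x = 1.0
next_up = math.nextafter(x, math.inf)   # the closest float above 1.0
gap = next_up - x                       # the empty space between the two points

print(next_up)             # 1.0000000000000002
print(gap == 2.0 ** -52)   # True

# A value that falls inside the gap gets snapped to the nearest point.
print(x + gap / 4 == x)    # True
```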

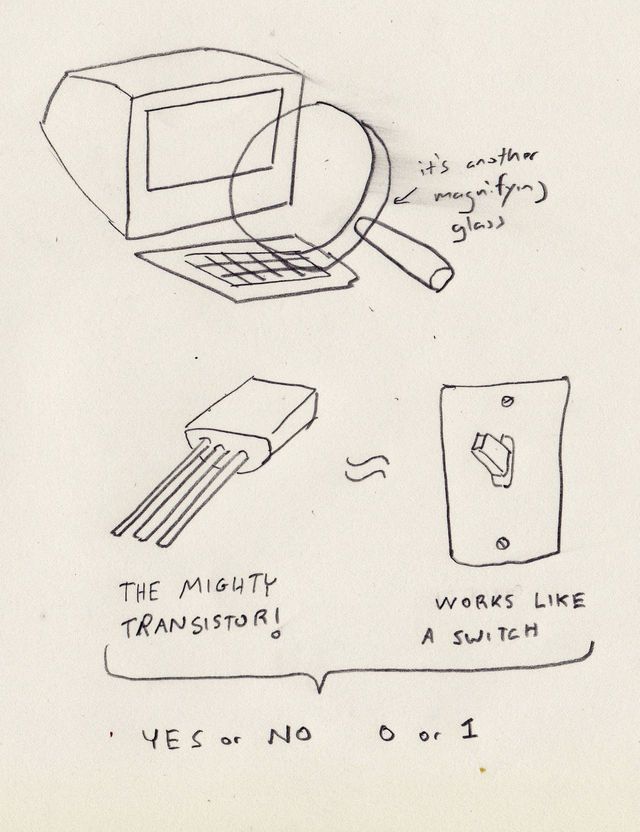

So, let's zoom in, again, to a computer. At the lowest level, they're using transistors for remembering and... computing... things. Transistors work like switches, with two positions: 0 and 1, or 'on' and 'off'.

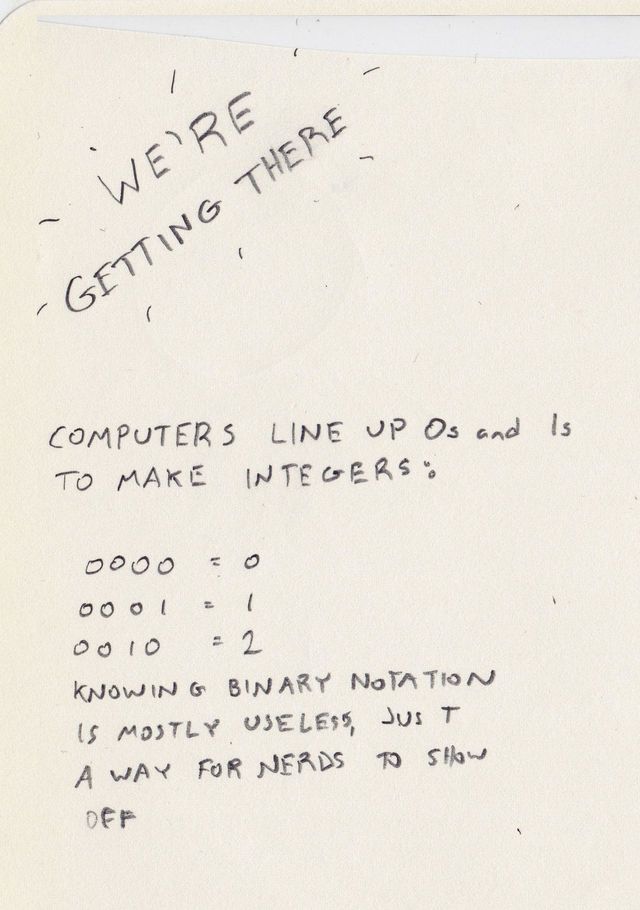

Given this barebones set of symbols - just 0 and 1 - computers use abstraction to store more complicated things, like, breathtakingly, numbers that are greater than 1 or less than 0. And then they can map those numbers to more interesting things, like this blog post, hopefully, or images.
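Here's a rough sketch of those layers of abstraction, assuming Python and two common conventions that the graphics don't spell out: base-2 place values for integers, two's complement for negative numbers, and Unicode code points for text.

```python
bits = "1001000"          # seven switches, each off (0) or on (1)
number = int(bits, 2)     # read the switches as a base-2 integer
letter = chr(number)      # map that integer to a character

print(number)   # 72
print(letter)   # H

# Less than 0: under two's complement, eight switches all "on" mean -1.
negative = int.from_bytes(bytes([0b11111111]), "big", signed=True)
print(negative)  # -1
```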

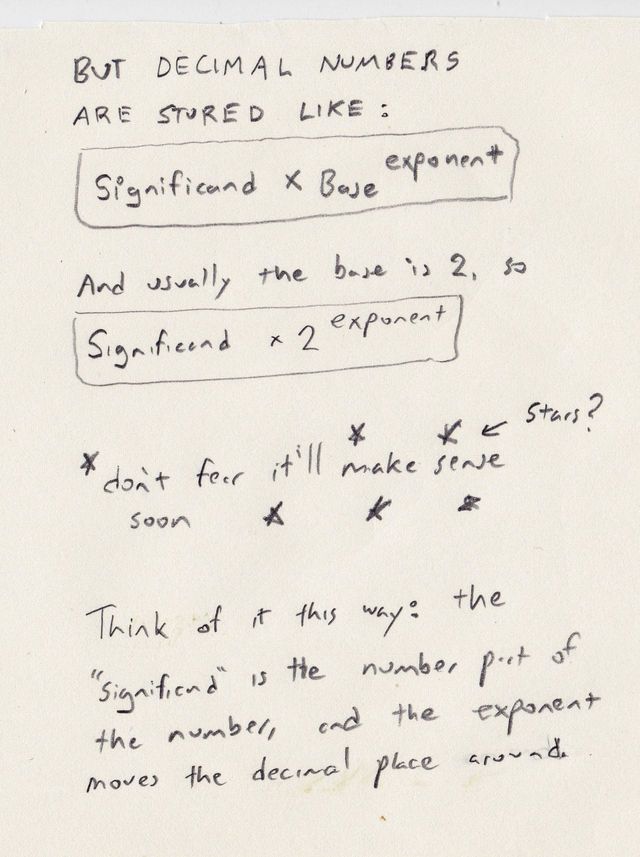

But what about decimal numbers - non-integers with decimal points and numerals after them? Well, computers use a neat system called floating-point. It uses abstraction - again - to use two integers to create each floating-point number.

Here's that equation:

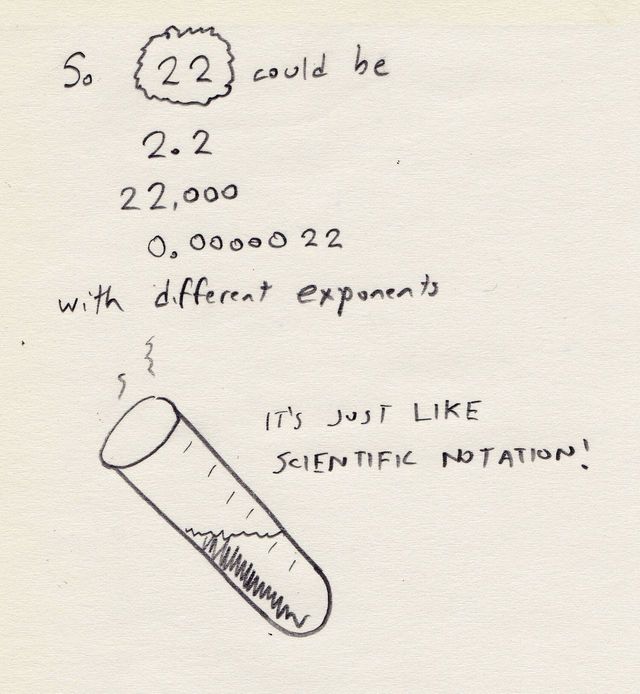

This is a really cool system! It means that people can work with both super tiny numbers and super huge numbers, just like scientific notation. Which is no coincidence: it's basically the same as scientific notation.
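As a sketch of the two-integer idea, assume the usual form: value = significand × base^exponent, with base 10 as in the graphics. The helper name `from_parts` is made up for this example, and Python's `decimal` module is only there to keep the base-10 arithmetic exact.

```python
from decimal import Decimal

def from_parts(significand: int, exponent: int) -> Decimal:
    """Hypothetical helper: build significand * 10**exponent from two integers."""
    return Decimal(significand).scaleb(exponent)

print(from_parts(1234, -3))    # 1.234
print(from_parts(1234, 5))     # 1.234E+8
print(from_parts(1234, -10))   # the same four digits, slid way down the number line
```

The significand holds the digits and the exponent slides the decimal point around, which is where the 'floating' in floating-point comes from.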

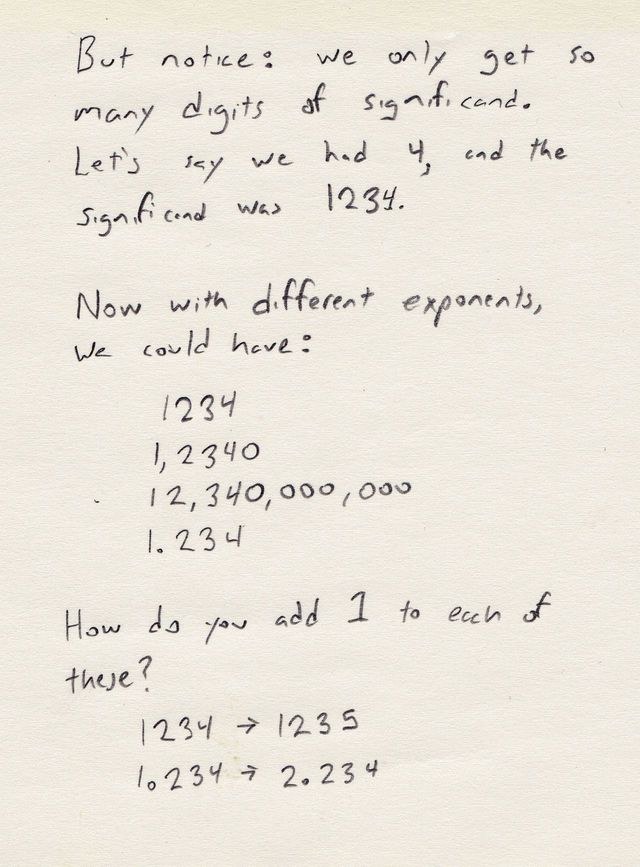

But notice that there are limits: we only have so much detail in the significand and exponent, only so many bits that we spend for them. While we can represent gigantic numbers, that doesn't mean we can represent them with perfect precision.
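To make that limit concrete, here's a sketch of a toy format with a 4-digit, base-10 significand, like the one in these graphics. It assumes Python's `decimal` module with its default rounding; real floats are base 2 and have far more digits.

```python
from decimal import Context, Decimal

four_digits = Context(prec=4)   # toy format: only 4 significant digits survive

big = four_digits.create_decimal("123456789")
print(big)        # 1.235E+8
print(int(big))   # 123500000: everything past the fourth digit is gone

# Adding something smaller than the gap doesn't move us to a new point.
print(four_digits.add(big, Decimal(1000)) == big)   # True
```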

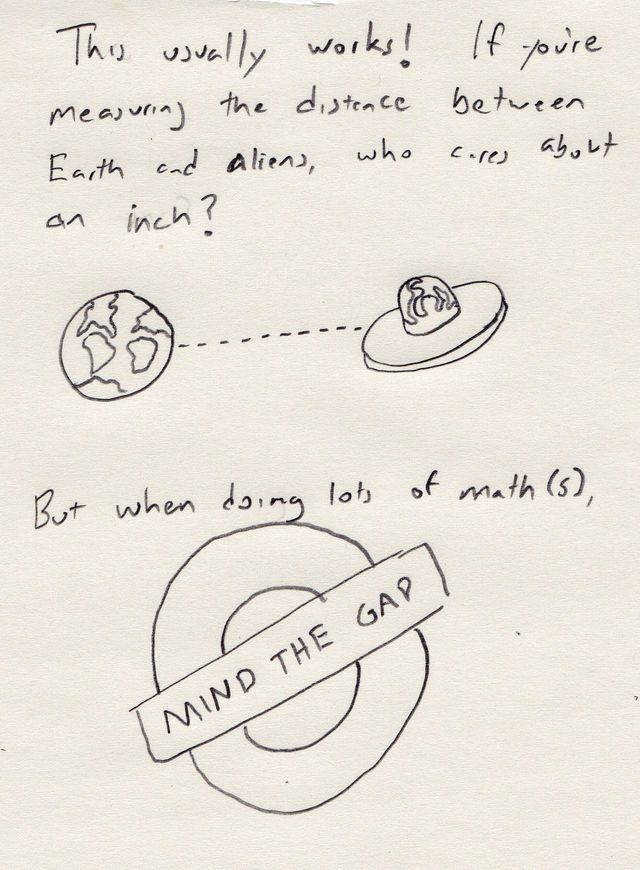

In fact, the more gigantic they get, in either the positive or negative direction, the bigger those gaps in the number line get. So not only is the number line gappy for computers, it's not consistently gappy - the gaps get bigger as you get closer to the maximum representable number.
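The growing gaps are easy to measure. This sketch assumes Python 3.9 or newer, where `math.ulp` reports the distance from a float to the next one away from zero; the sample values are arbitrary.

```python
import math

samples = [1.0, 1_000.0, 1e9, 1e15, 1e300]
gaps = [math.ulp(x) for x in samples]

for x, gap in zip(samples, gaps):
    print(x, "-> gap to the next number:", gap)

# Past 2**53 the gap is bigger than 1, so adding 1 can do nothing at all.
print(2.0 ** 53 + 1 == 2.0 ** 53)   # True
```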

Don't panic: computer numbers are usually good enough! Cool people like NASA rocket scientists are smart enough to know how much precision they really need, and often it isn't that much.

That said, there are places where this disconnect between theory and reality catches up with you and you have to write programs that do computer-safe math, instead of translating mathematical equations to code. Next time, we'll discuss some of them, like the Kahan summation algorithm that we use in simple-statistics.
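As a small preview, here's a sketch of the textbook form of Kahan summation next to a plain running total. It assumes Python, and it isn't necessarily how simple-statistics writes it; the function names and the sample data are made up for the example.

```python
def naive_sum(values):
    """Add numbers the way the math equation says to."""
    total = 0.0
    for value in values:
        total += value
    return total

def kahan_sum(values):
    """Textbook Kahan summation: carry the rounding error along and correct for it."""
    total = 0.0
    compensation = 0.0   # the error lost by earlier additions
    for value in values:
        adjusted = value - compensation
        new_total = total + adjusted
        # (new_total - total) is what actually got added; the rest was lost.
        compensation = (new_total - total) - adjusted
        total = new_total
    return total

values = [1e16] + [1.0] * 10   # one huge number, then ten small ones

print(naive_sum(values) == 1e16)        # True: every 1.0 fell into a gap
print(kahan_sum(values) == 1e16 + 10)   # True: the small numbers were kept
```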

Nerd disclaimers

- Here we're talking about floating-point numbers in the style of IEEE 754. There are fascinating alternatives, like DEC64, that we don't discuss. There are also rational data types, which are very cool, but also unmentioned, except for here.

- I'm using base 10 for demonstration. Most floating-point numbers use base 2, but 10 is much more familiar and jibes well with our base-10 Arabic numerals.

- Knowing binary might be useful and being a nerd is totally cool, I'm just kidding about that part.

- In real life, we get way more than 4 digits of significand. 64-bit floating-point numbers have 53 bits of significand precision (52 stored, plus one implied): that's a lot of usable numbers.

- I'm using the term 'computer' as 'digital computer'. Analog computers do exist and, being analog, can represent infinite numbers of values, at the cost of not being useful for very much and being really big and expensive.

- There are technical words for many of the things I'm discussing here, like numerical error, numerical analysis, and discretization. Since this is a post about learning, I try to avoid unnecessary syllables, but consider them mentioned.

- Transistors also work as amplifiers and do lots of other things. I'm only mentioning their switch-like abilities here, for simplicity.

- This blog post was written under the rule of a despicable impeachable president, and it isn't about him and doesn't mention his cheeto evil, until now. Writing about nerd stuff is a good, brief respite and hopefully reading about it is too. And then, donate to the ACLU or IRC if you liked it, to do what we can to counteract hatred.

@macwright.com on Bluesky, @tmcw@mastodon.social on Mastodon