Stumbling blocks on the way to web performance

I care about web performance – really I do. But we need to talk about something: a lot of the things we recommend for web performance are bad. The technology is unfinished, the implementations are rickety, and overall it's just not easy to make something performant by default.

We're missing a next-generation image format

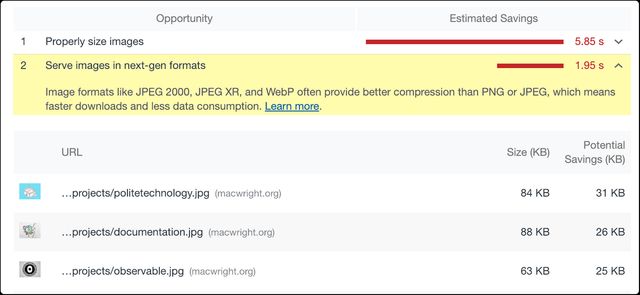

My website is perfect. But if I run Chrome's performance auditor on some of the pages, I see this:

Next-gen formats: that sounds good! And three choices.

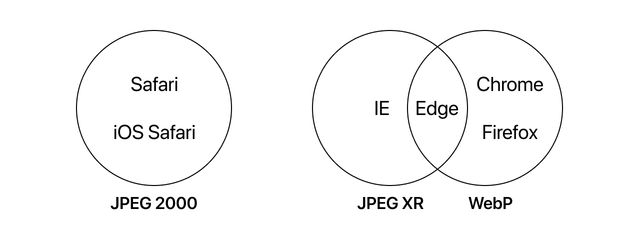

Why are there three choices? Let's draw a quick Venn diagram of the browser support for those formats:

This chart is more optimistic than it was a few months ago, because Edge started supporting WebP right before it hit the end of the line. But it's still pretty dire, especially because Firefox only gained WebP support in version 65, which has 0.05% market share, a tiny fraction of, say, version 64, which has 1.66% share.

So if you want to avoid the failure case of 'blank images for anyone using an iPhone', you'll need to use a picture element and keep two or more copies of every image.

So I suppose Chrome is suggesting you literally support next-gen image formats: at least two or three of them, per image. And then, of course, versions of those images for high-DPI displays. So maybe… 5 images per image?
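To make the bookkeeping concrete, here is a rough TypeScript sketch of what a picture element for a single photo might involve. The file-naming scheme (`photo@2x.webp`), the helper names, and the choice of WebP with a JPEG fallback are all assumptions made for illustration, not something Lighthouse prescribes.

```typescript
// One encoded format of the same picture. The extension/MIME pairs used below
// are assumptions chosen for illustration.
interface Format {
  ext: string;
  mime: string;
}

// Hypothetical naming scheme: photo.webp at 1x, photo@2x.webp at 2x.
function fileName(base: string, ext: string, density: number): string {
  return density === 1 ? `${base}.${ext}` : `${base}@${density}x.${ext}`;
}

// Build a srcset value such as "photo.webp 1x, photo@2x.webp 2x".
function srcset(base: string, ext: string, densities: number[]): string {
  return densities.map((d) => `${fileName(base, ext, d)} ${d}x`).join(", ");
}

// Every file that has to exist on disk for a single picture.
function variantFiles(base: string, formats: Format[], densities: number[]): string[] {
  const files: string[] = [];
  for (const f of formats) {
    for (const d of densities) files.push(fileName(base, f.ext, d));
  }
  return files;
}

// The last format is treated as the universal fallback and goes on <img>;
// the others become <source> elements, tried in order.
// Assumes densities includes 1 and that alt needs no HTML escaping.
function pictureMarkup(base: string, alt: string, formats: Format[], densities: number[]): string {
  const fallback = formats[formats.length - 1];
  if (fallback === undefined) throw new Error("need at least one format");
  const sources = formats
    .slice(0, -1)
    .map((f) => `  <source type="${f.mime}" srcset="${srcset(base, f.ext, densities)}">`);
  const img =
    `  <img src="${fileName(base, fallback.ext, 1)}" ` +
    `srcset="${srcset(base, fallback.ext, densities)}" alt="${alt}">`;
  return ["<picture>"].concat(sources, [img, "</picture>"]).join("\n");
}

const formats: Format[] = [
  { ext: "webp", mime: "image/webp" },
  { ext: "jpg", mime: "image/jpeg" },
];
console.log(pictureMarkup("photo", "A photo", formats, [1, 2]));
console.log(variantFiles("photo", formats, [1, 2]).length);
```

With two formats and two densities that's four files for one photo. Adding a third format would make it six.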

Sure, you can use a service like imgix to generate these images, but do we really need a software-as-a-service with accompanying pricing just to show a picture?

Image lazy-loading is terrible

So: lazy-loading is the technique in which you pay attention to the part of the page that a user can see, so you can load just the images in view. If the page is long and has lots of images 'below the fold' - out of initial view - then you avoid loading those images until they're visible.

Sounds like a good idea, right? Well, a lot of folks will recommend lazy-loading. Unfortunately, it's terrible.

Every single approach to image lazy loading makes JavaScript a prerequisite for seeing images. Take the most popular image lazy loading solution on GitHub: layzr.js (5,555 stars as of this writing). Here's its demo, with JavaScript disabled:

There are supposed to be images in that blank spot. This isn't the fault of the maintainer, or any of the other maintainers: without a browser standard for image lazy loading, it simply isn't possible to do without sacrificing degradability. With a lazy-loading library, your image elements might look like:

<img data-normal="normal.jpg">

<!-- or perhaps… -->

<img class="lozad" data-src="image.png" />

There are lots of variations, none of them remotely semantic or clean. Tools like Instapaper, which naïvely expect images to be images, need to jump through hoops to attempt to detect lazy-loaded images. Tools like archive.org and archive.is, which attempt to detect image references, have mixed luck figuring out how to download images.
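The reason those elements go blank without JavaScript is easy to see in a sketch of what such a library has to do: nothing sets `src` until a script copies it over from `data-src`. The following TypeScript is an illustration under assumptions, not the code of layzr.js or lozad. The `LazyImg` and `VisibilityEntry` types and the `loadVisible` name are hypothetical stand-ins so that it runs outside a browser.

```typescript
// Minimal structural types so this sketch type-checks and runs without a DOM.
interface LazyImg {
  src: string;
  dataset: { [name: string]: string | undefined };
}
interface VisibilityEntry {
  isIntersecting: boolean;
  target: LazyImg;
}

// Copy data-src into src for every entry that has scrolled into view.
// Returns the images that were promoted so the caller can stop observing them.
function loadVisible(entries: VisibilityEntry[]): LazyImg[] {
  const loaded: LazyImg[] = [];
  for (const entry of entries) {
    const real = entry.target.dataset["src"];
    if (!entry.isIntersecting || real === undefined) continue;
    entry.target.src = real;
    delete entry.target.dataset["src"];
    loaded.push(entry.target);
  }
  return loaded;
}

// Browser wiring, shown as comments because it needs a DOM:
// const io = new IntersectionObserver((entries) => {
//   for (const img of loadVisible(entries as unknown as VisibilityEntry[])) {
//     io.unobserve(img as unknown as Element);
//   }
// });
// document.querySelectorAll("img[data-src]").forEach((img) => io.observe(img));
```

If the script never runs, `loadVisible` never runs, and every image stays at its empty starting state.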

The good news: there's a proposal to implement native lazyload. Unfortunately, it'll be a minute until it's implemented everywhere, because it hasn't been implemented in shipping Chrome yet.

Edit: Ryan Barrett informs me on Twitter that you can add a fallback image in a noscript tag. That’s clever, and reportedly what WordPress does.
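A sketch of that noscript pattern, as a small TypeScript helper that emits the markup. The `lazyImageWithFallback` name is hypothetical, the `lozad` class and `data-src` attribute are borrowed from the example above, and this isn't a claim about the exact markup WordPress produces.

```typescript
// Emit a lazy-loaded <img> plus a plain <img> inside <noscript>, so the
// picture is still reachable when scripts are disabled.
// Assumes src and alt need no HTML escaping.
function lazyImageWithFallback(src: string, alt: string): string {
  return [
    `<img class="lozad" data-src="${src}" alt="${alt}">`,
    "<noscript>",
    `  <img src="${src}" alt="${alt}">`,
    "</noscript>",
  ].join("\n");
}

console.log(lazyImageWithFallback("image.png", "An example image"));
```

The cost is that every image now appears twice in the markup, which is its own kind of hoop for tools that scrape image references.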

Service workers are usually overkill

It's good to aggressively cache resources. It's also cool to support offline websites. Doing so with service workers, though, is almost guaranteed to be overengineering. The service worker specification, mostly Google-authored, is massive. So massive that Google themselves constantly route developers to Workbox, a 'set of libraries' meant to abstract around the spec. Workbox certainly simplifies service workers… a little: but it still leaves you with 14 libraries and 3 modules. And, most critically, it all puts the responsibility on you to implement cache invalidation, one of the two hardest problems in Computer Science – making it easy to accidentally retain private information from earlier sessions.
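For a sense of what implementing cache invalidation yourself can look like, one common approach is to put a version in the cache name and delete every older cache when a new worker activates. This sketch assumes hypothetical names (the `site-` prefix, the `v2` version, `staleCaches`). The wiring in the comments uses the standard `caches.keys()` and `caches.delete()` calls and only runs inside a service worker.

```typescript
// Hypothetical naming scheme: one cache per deploy, e.g. "site-v1", "site-v2".
const CACHE_PREFIX = "site-";
const CACHE_VERSION = "v2";
const CURRENT_CACHE = CACHE_PREFIX + CACHE_VERSION;

// Pick the caches this site created in earlier versions.
// Caches with a different prefix are left alone.
function staleCaches(existing: string[], current: string, prefix: string): string[] {
  return existing.filter((name) => name.indexOf(prefix) === 0 && name !== current);
}

// Service worker wiring, shown as comments because it needs the worker globals:
// self.addEventListener("activate", (event) => {
//   event.waitUntil(
//     caches.keys().then((names) =>
//       Promise.all(
//         staleCaches(names, CURRENT_CACHE, CACHE_PREFIX).map((name) => caches.delete(name))
//       )
//     )
//   );
// });

console.log(staleCaches(["site-v1", "site-v2", "other-v1"], CURRENT_CACHE, CACHE_PREFIX));
```

Nothing here bumps `CACHE_VERSION` for you. Forget to change it on a deploy and returning visitors may keep getting whatever the old worker stored.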

But, nonetheless, Lighthouse earnestly recommends you register a service worker to make your website a fully performant Progressive Web App - after all, it's under the Exemplary checklist. There are certainly apps that could take advantage of Service Workers, but I think it's at least a good example of a spec that doesn't scale down properly – adding Service Workers to optimize a smaller project always feels like overkill.

Making fast websites shouldn't be rocket science, and shouldn't come with brutal tradeoffs. Once a few of these specifications land, and if browser vendors decide to agree on formats, I think things will get a lot better.

@macwright.com on Bluesky, @tmcw@mastodon.social on Mastodon